Secure Your Cloud ML: Unmasking Adversarial AI Data Attacks

Poisoned Pipelines: Unmasking the Invisible Threat of Adversarial AI Data Attacks in Cloud ML Environments

The promise of Artificial Intelligence continues to reshape industries, driving unprecedented innovation and efficiency. From autonomous vehicles navigating complex urban landscapes to sophisticated fraud detection systems safeguarding financial transactions, AI models are increasingly entrusted with critical decision-making across virtually every sector. Yet, beneath this veneer of technological progress lies a growing, insidious threat: adversarial AI data attacks. These aren’t your typical cyber intrusions; they represent a fundamental challenge to the integrity and reliability of AI systems, particularly within the dynamic, interconnected landscape of cloud machine learning (ML) environments.

As organizations accelerate their adoption of cloud-native ML pipelines, the attack surface expands dramatically. Data, the lifeblood of any AI model, becomes a prime vector for malicious actors seeking to corrupt models, introduce subtle backdoors, or degrade performance with devastating consequences. This post delves deep into the mechanisms, impacts, and essential countermeasures against adversarial AI data attacks, equipping senior technical leaders and ML practitioners with the knowledge to fortify their defenses.

Table of Contents

- The Ubiquitous Rise of AI and Its Inherited Vulnerabilities

- Understanding Adversarial AI Data Attacks

- The Anatomy of a Poisoned Pipeline: Attack Vectors and Methods

- The Devastating Impact: Beyond Model Performance Degradation

- Fortifying Your Cloud ML Defenses: A Multi-Layered Approach

- Frequently Asked Questions

- Conclusion: Securing the Future of AI

The Ubiquitous Rise of AI and Its Inherited Vulnerabilities

Artificial Intelligence is no longer a futuristic concept; it is an intrinsic component of modern enterprise infrastructure. From optimizing supply chains and personalizing customer experiences to powering critical infrastructure and national security systems, AI’s footprint is expanding rapidly. This pervasive integration underscores an urgent need to address its inherent vulnerabilities, particularly as these systems move into dynamic cloud environments.

Unlike traditional software, where vulnerabilities often reside in code logic or configuration, AI systems introduce a new attack surface: the data itself. The integrity of an AI model is inextricably linked to the quality and trustworthiness of the data it learns from. Any compromise at the data layer can propagate throughout the entire ML pipeline, leading to unpredictable, erroneous, or even malicious behavior.

Understanding Adversarial AI Data Attacks

The concept of adversarial attacks on AI has gained significant traction, highlighting a sophisticated new frontier in cybersecurity. These attacks specifically target the unique characteristics of machine learning models.

What are Adversarial AI Data Attacks?

Adversarial AI data attacks involve the intentional and malicious manipulation of data used to train, validate, or operate machine learning models. The primary goal is to subvert the model’s intended function, forcing it to make incorrect predictions, exhibit biased behavior, or even introduce hidden backdoors that can be exploited later. These attacks leverage the statistical nature of ML algorithms, exploiting their sensitivity to data patterns.

Unlike traditional cyberattacks that might aim to steal data or disrupt services, adversarial data attacks seek to corrupt the intelligence of the system. They target the very foundation upon which AI decisions are made. This makes them particularly insidious, as the model may appear to function normally while harboring critical flaws.

The Two Faces of Data Attacks: Poisoning vs. Evasion

While often discussed together, it’s crucial to distinguish between two primary categories of adversarial data attacks: poisoning and evasion. Both manipulate data, but they do so at different stages of the ML lifecycle.

Poisoning Attacks

Poisoning attacks occur during the training phase of an ML model. Malicious actors inject carefully crafted, corrupted data into the training dataset, influencing the model’s learning process. The objective is to subtly alter the model’s parameters and decision boundaries, leading to degraded performance, biased outcomes, or the creation of specific backdoors.

Consider a spam filter: an attacker who can influence the training data might submit many messages that share a subtle, consistent feature and get them labeled as spam, so that the model later flags legitimate emails containing that feature. Conversely, the attacker could get spam-like messages labeled as legitimate, teaching the model to let malicious content bypass defenses.

There are several types of poisoning attacks:

* Label Manipulation: An attacker changes the labels of a small fraction of the training data, for instance relabeling images of stop signs as speed limit signs so that an autonomous vehicle’s model learns to misread them.

* Feature Injection: Malicious features are subtly introduced into training samples. This could involve embedding specific, nearly imperceptible patterns into images or text that, when present, trigger a specific, incorrect classification by the model. This is often used to create a “backdoor” where the model behaves normally until a specific trigger is present.

The insidious nature of poisoning lies in its “invisible” impact; the model may perform well on general test sets, but fail catastrophically under specific, targeted conditions.
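To make label manipulation concrete, here is a minimal sketch using a toy one-dimensional nearest-centroid classifier. The data, the classifier, and the helper names `fit_threshold`, `predict`, and `flip_labels` are all illustrative assumptions rather than a description of any real attack tool. It shows how relabeling two of ten training points can move the decision threshold enough to change the prediction for a borderline input.

```python
from statistics import mean

def fit_threshold(samples):
    """Fit a 1-D nearest-centroid classifier.

    Returns the midpoint between the two class means; inputs above it
    are predicted as class 1, all others as class 0.
    """
    c0 = mean(x for x, y in samples if y == 0)
    c1 = mean(x for x, y in samples if y == 1)
    return (c0 + c1) / 2

def predict(threshold, x):
    return 1 if x > threshold else 0

def flip_labels(samples, targets):
    """Return a copy of samples with the label flipped for every x in targets."""
    return [(x, 1 - y) if x in targets else (x, y) for x, y in samples]

# Toy training set (hypothetical): class 0 at 0..4, class 1 at 6..10.
clean = [(float(x), 0) for x in range(5)] + [(float(x), 1) for x in range(6, 11)]
# The attacker relabels the two class-1 points closest to the boundary.
poisoned = flip_labels(clean, targets={6.0, 7.0})

clean_threshold = fit_threshold(clean)        # midpoint of class means 2.0 and 8.0
poisoned_threshold = fit_threshold(poisoned)  # class-0 mean is dragged upward

borderline = 6.0
print(predict(clean_threshold, borderline), predict(poisoned_threshold, borderline))
```

With this toy data the clean model assigns the borderline input to class 1, while the poisoned model assigns it to class 0, even though only a fifth of the labels changed. Real models are far less transparent, which is part of why such shifts are hard to spot.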

Evasion Attacks

Evasion attacks, in contrast, occur during the inference phase, when a model is already deployed. Here, the attacker crafts adversarial examples: inputs altered by perturbations so small that humans typically do not notice them, yet sufficient to make a trained model misclassify. For example, adding such noise to an image of a cat might cause a vision model to classify it as a dog.

While highly effective and well-researched, evasion attacks differ from poisoning in that they don’t corrupt the model’s core learning. Instead, they exploit the model’s inherent sensitivities to make it misbehave on specific, crafted inputs. Our primary focus here, “poisoned pipelines,” centers on the foundational corruption of models via data poisoning during the training and retraining processes.
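For contrast with poisoning, the evasion mechanism can be sketched with the fast gradient sign method (FGSM) applied to a tiny logistic regression model. This is a toy under stated assumptions: the weights, the input, and the step size `epsilon` are made up, and the gradient formula used (loss gradient with respect to the input equals `(p - y) * w`) holds for logistic regression with cross-entropy loss, not for models in general.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict_proba(weights, bias, x):
    """Probability of class 1 under a logistic regression model."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)

def sign(value):
    return (value > 0) - (value < 0)

def fgsm(weights, bias, x, y_true, epsilon):
    """Move each feature by epsilon in the direction that increases the loss.

    For logistic regression with cross-entropy loss, the gradient of the
    loss with respect to the input is (p - y_true) * weights.
    """
    p = predict_proba(weights, bias, x)
    grad = [(p - y_true) * w for w in weights]
    return [xi + epsilon * sign(g) for xi, g in zip(x, grad)]

# Hypothetical model and input, chosen only for illustration.
weights, bias = [2.0, -3.0, 1.0], 0.0
x = [0.3, -0.1, 0.2]                      # classified as class 1
x_adv = fgsm(weights, bias, x, y_true=1, epsilon=0.25)

print(predict_proba(weights, bias, x) > 0.5, predict_proba(weights, bias, x_adv) > 0.5)
```

In this three-feature toy the step size is large relative to the input. In high-dimensional inputs such as images, a much smaller per-feature change can add up to the same effect, which is what makes the perturbation hard to see. Note that the model itself is untouched here; only the input changes.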

Why Cloud ML Environments are Prime Targets

Cloud ML environments, while offering unparalleled scalability, flexibility, and cost-efficiency, also present unique vulnerabilities that make them particularly susceptible to adversarial AI data attacks.

- Distributed Data Sources: Cloud ML projects often aggregate data from diverse sources: APIs, IoT devices, web scrapes, third-party datasets, and internal databases. Each ingestion point is a potential vector for malicious data injection.

- Complex Supply Chains: The modern ML pipeline is rarely self-contained. It often relies on pre-trained models, transfer learning, and shared datasets from external providers. Trusting these external components without rigorous validation introduces significant risk.

- Automated and Continuous Learning: Many cloud ML systems are designed for continuous integration/continuous deployment (CI/CD) and online learning, where models are regularly updated with new data. This automation, while efficient, can inadvertently perpetuate and amplify poisoned data, rapidly propagating errors throughout the system.

- Shared Infrastructure and APIs: Multi-tenant cloud environments and extensive API integrations can create broader attack surfaces. A compromise in one part of a connected ecosystem could allow an attacker to inject poisoned data into another.

- Lack of Visibility and Control: The abstraction layers inherent in cloud services, while simplifying deployment, can sometimes obscure the underlying data flows and processing, making it harder to detect subtle data anomalies or malicious injections.

The Anatomy of a Poisoned Pipeline: Attack Vectors and Methods

Understanding where and how adversaries can inject poisoned data is crucial for building robust defenses. The entire ML pipeline, from data acquisition to model deployment, presents potential vulnerabilities.

Data Ingestion Points

The earliest stage of the pipeline, where raw data enters the system, is a critical vulnerability.

* Public APIs and Web Scraping: If an ML model relies on data from public APIs or web scraping, an attacker could manipulate the external source to feed poisoned data. For example, injecting malicious reviews into a product recommendation system.

* IoT Sensor Feeds: In industrial or smart city applications, compromised IoT devices could transmit erroneous or crafted sensor readings, polluting the training data for predictive maintenance or traffic management models.

* Manual Uploads and Third-Party Data: Data scientists or engineers might inadvertently upload compromised datasets obtained from untrusted sources, or an insider threat could deliberately introduce malicious files.

Data Preprocessing and Feature Engineering

Even if raw data is clean, the subsequent stages of transformation can be exploited.

* Feature Engineering Manipulation: An attacker could target the scripts or processes that generate features from raw data, introducing subtle biases or patterns. For example, a feature scaling process could be tampered with to amplify specific, malicious data points.

* Data Cleaning Anomalies: Automated data cleaning routines, if not robustly designed, can be tricked into “cleaning” away legitimate data while retaining or even amplifying poisoned entries that mimic normal noise.

* Data Augmentation Exploits: Techniques like image rotation or noise addition for data augmentation could be manipulated to introduce specific adversarial patterns across a larger dataset.

Model Training and Retraining

The core learning phase is directly impacted by poisoned data.

* Initial Training Contamination: If the initial training dataset is compromised, the foundational model will be inherently flawed, potentially carrying a backdoor from its inception.

* Continuous Learning Pipelines: Systems that constantly update models with new incoming data are highly vulnerable. A steady stream of poisoned data can gradually shift the model’s decision boundaries over time, making the attack harder to pinpoint to a single event.

* Federated Learning Risks: In federated learning, where models are trained on decentralized datasets and only model updates are shared, a malicious client could submit poisoned model updates, corrupting the global model.
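The federated learning risk above can be illustrated with a small aggregation sketch. It compares plain averaging of client updates with a coordinate-wise median, one commonly discussed robust alternative. The update vectors and the `aggregate` helper are hypothetical, and the median is only one possible choice; it tolerates a minority of malicious clients but is not a complete defense.

```python
from statistics import mean, median

def aggregate(updates, reducer):
    """Combine client updates coordinate by coordinate using the given reducer."""
    return [reducer(coordinate) for coordinate in zip(*updates)]

# Hypothetical two-parameter updates from four honest clients.
honest = [[0.10, -0.20], [0.12, -0.18], [0.09, -0.22], [0.11, -0.21]]
# One malicious client submits an extreme update to drag the global model.
malicious = [[50.0, 50.0]]
updates = honest + malicious

averaged = aggregate(updates, mean)    # heavily skewed by the single outlier
robust = aggregate(updates, median)    # stays within the honest range

print(averaged, robust)
```

A single extreme update is enough to pull the averaged parameters far from the honest values, while the median stays among them. An attacker who submits subtler updates, or who controls many clients, can still influence a median-based aggregate.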

Third-Party Data and Models

The increasing reliance on external resources introduces supply chain risks.

* Pre-trained Models: Using pre-trained models from public repositories or third-party vendors without thorough vetting can introduce vulnerabilities. These models might have been intentionally poisoned during their initial training.

* Shared Datasets: Leveraging publicly available or commercially acquired datasets requires extreme caution. An attacker could compromise these datasets at their source or during distribution.

* MLaaS (Machine Learning as a Service) Platforms: While cloud providers offer secure environments, the user’s responsibility extends to the data they feed into these platforms. Poor data governance on the user’s end can still lead to poisoned outcomes.
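One basic supply-chain control for third-party models and datasets is to pin a cryptographic digest and refuse to load an artifact that does not match. The sketch below assumes the expected SHA-256 value is obtained through a trusted channel; the file name and the helper functions `sha256_of_file` and `verify_artifact` are illustrative. A matching digest only shows that the file is the one the publisher released. It says nothing about whether that release was poisoned at the source.

```python
import hashlib
import hmac
import os
import tempfile

def sha256_of_file(path, chunk_size=1 << 20):
    """Stream a file through SHA-256 so large artifacts need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def verify_artifact(path, pinned_digest):
    """Return True only if the file's digest matches the pinned value."""
    return hmac.compare_digest(sha256_of_file(path), pinned_digest.lower())

# Demo with a throwaway file standing in for a downloaded model.
with tempfile.TemporaryDirectory() as workdir:
    artifact = os.path.join(workdir, "model.bin")  # hypothetical file name
    with open(artifact, "wb") as handle:
        handle.write(b"pretend these are model weights")
    pinned = sha256_of_file(artifact)  # in practice, obtained out of band

    ok_before = verify_artifact(artifact, pinned)
    with open(artifact, "ab") as handle:
        handle.write(b"\x00")  # simulate tampering during distribution
    ok_after = verify_artifact(artifact, pinned)

print(ok_before, ok_after)
```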

The Devastating Impact: Beyond Model Performance Degradation

The consequences of adversarial AI data attacks extend far beyond mere inaccuracies or reduced model performance. They can inflict severe, multi-faceted damage on an organization.

Financial Losses

Poisoned models can directly lead to significant financial repercussions.

* Fraud and Incorrect Decisions: A compromised fraud detection system might approve fraudulent transactions or falsely flag legitimate ones, leading to direct financial losses or customer attrition.

* Operational Disruption: In industrial settings, a poisoned predictive maintenance model could cause unnecessary shutdowns or equipment failures, incurring costly downtime and repairs.

* Market Manipulation: In finance, a poisoned algorithmic trading model could be manipulated to make disadvantageous trades, leading to substantial market losses.

Reputational Damage

Trust is paramount, and a poisoned AI system can erode it quickly.

* Loss of Customer Trust: If an AI-powered service consistently provides incorrect recommendations, biased results, or fails to protect user data, customers will lose faith in the brand.

* Public Scrutiny: High-profile failures due to AI vulnerabilities can attract negative media attention, regulatory investigations, and public backlash, severely damaging an organization’s reputation.

* Brand Erosion: A reputation for unreliable or insecure AI can make it difficult to attract talent, partners, and investment in the long term.

Security and Safety Risks

In critical applications, the stakes are even higher.

* Autonomous Systems: Poisoning attacks on autonomous vehicles could lead to misinterpretations of road signs or obstacles, potentially causing accidents and endangering lives.

* Medical Diagnostics: A compromised AI diagnostic tool might misclassify diseases, leading to incorrect treatments or delayed interventions, with life-threatening consequences.

* Critical Infrastructure: AI systems managing power grids, water treatment, or communication networks, if poisoned, could be manipulated to cause widespread outages or system failures.

* Backdoor Activation: The most insidious form of poisoning creates a backdoor. The model performs normally until a specific, hidden trigger is present in the input, at which point it executes a pre-programmed malicious behavior, often with severe security implications.

Regulatory and Compliance Violations

The increasing regulatory landscape around AI imposes significant responsibilities.

* GDPR and Data Privacy: Poisoned data can contribute to privacy breaches or amplify biases in how personal data is processed, potentially putting an organization in violation of regulations such as GDPR or CCPA and exposing it to substantial fines.

* Industry-Specific Regulations: Sectors like finance, healthcare, and defense have strict compliance requirements. A compromised AI system could lead to non-compliance, legal action, and loss of operating licenses.

* Ethical AI Guidelines: Poisoning can introduce or exacerbate algorithmic bias, going against ethical AI principles and potentially leading to discriminatory outcomes.

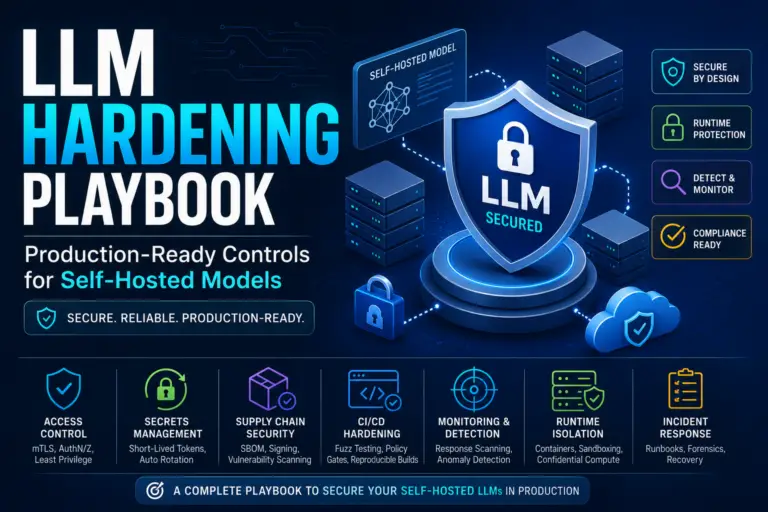

Fortifying Your Cloud ML Defenses: A Multi-Layered Approach

Protecting against adversarial AI data attacks requires a comprehensive, multi-layered security strategy that spans the entire ML lifecycle. It demands a shift from traditional cybersecurity thinking to one that specifically addresses the unique vulnerabilities of data-driven systems.

Robust Data Validation and Sanitization

The first line of defense is ensuring the integrity of your data inputs.

* Pre-processing Filters: Implement strong filters at all data ingestion points to detect and block suspicious data patterns, out-of-range values, or known adversarial examples.

* Anomaly Detection: Employ statistical and ML-based anomaly detection techniques on incoming data streams to identify outliers that might indicate poisoning attempts.

* Data Provenance and Lineage Tracking: Maintain detailed records of data origin, transformations, and access. This allows for auditing and rollback if poisoned data is discovered.

* Schema Validation and Constraints: Enforce strict data schemas and constraints to ensure data conforms to expected formats and ranges.
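Two of the checks above, schema constraints and statistical anomaly detection, can be sketched in a few lines. The field names, ranges, and the `SCHEMA` table are hypothetical, and the outlier test uses the modified z-score based on the median absolute deviation (MAD) with the commonly used cutoff of 3.5. Filters like these catch crude injections; poisoning that is crafted to look statistically normal can pass them.

```python
from statistics import median

# Hypothetical schema: field name -> (expected type, minimum, maximum).
SCHEMA = {
    "amount": (float, 0.0, 10_000.0),
    "age": (int, 18, 120),
}

def validate(record):
    """Return a list of schema violations for one record (empty if clean)."""
    errors = []
    for field, (ftype, low, high) in SCHEMA.items():
        value = record.get(field)
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, ftype):
            errors.append(f"{field}: expected {ftype.__name__}")
        elif not low <= value <= high:
            errors.append(f"{field}: {value} outside [{low}, {high}]")
    return errors

def mad_outliers(values, cutoff=3.5):
    """Flag values whose modified z-score exceeds the cutoff."""
    center = median(values)
    mad = median(abs(v - center) for v in values)
    if mad == 0:
        return []  # no spread to measure against; a different test is needed
    return [v for v in values if 0.6745 * abs(v - center) / mad > cutoff]

print(validate({"amount": 25.0, "age": 30}))
print(validate({"amount": -5.0, "age": True}))
print(mad_outliers([10, 12, 11, 13, 12, 11, 500]))
```

The median-based score is used here instead of mean and standard deviation because a few extreme injected values would otherwise inflate the very statistics used to detect them.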

Secure Data Pipelines and Access Controls

Protecting the movement and storage of data is paramount.

* Zero-Trust Principles: Apply zero-trust principles to all data access, verifying every user and device, and granting least-privilege access.

* Granular Permissions: Implement fine-grained access controls to data stores and ML pipeline components, ensuring only authorized personnel and services can read, write, or modify data.

* Encryption in Transit and At Rest: Encrypt all data, both when it’s being transmitted across networks and when it’s stored in cloud databases or object storage.

* Auditing and Logging: Maintain comprehensive logs of all data access, modifications, and model training events. Regularly review these logs for suspicious activity.
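Audit logs are only useful if they cannot be quietly edited after the fact. One way to make tampering detectable is to chain entries by hash, so that each entry commits to the one before it. The following is a minimal sketch; the event fields and the `append_entry` and `verify_chain` helpers are assumptions for illustration. An attacker who can rewrite the entire log could recompute the chain, so in practice the latest hash would also need to be stored somewhere the pipeline cannot modify.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def entry_hash(event, prev_hash):
    """Hash an event together with the hash of the preceding entry."""
    payload = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def append_entry(log, event):
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash,
                "hash": entry_hash(event, prev_hash)})

def verify_chain(log):
    """Return True if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry_hash(entry["event"], prev_hash) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True

# Hypothetical pipeline events.
log = []
append_entry(log, {"action": "ingest", "source": "sensor-feed", "rows": 1000})
append_entry(log, {"action": "transform", "step": "scale-features"})
append_entry(log, {"action": "train", "model": "fraud-v2"})

intact = verify_chain(log)
log[0]["event"]["rows"] = 1200  # someone edits history to hide injected rows
tampered = verify_chain(log)
print(intact, tampered)
```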

Model Robustness and Adversarial Training

Strengthening the models themselves against adversarial inputs is a proactive measure.

* Adversarial Training: Train models on a mix of legitimate and adversarial examples. This helps the model learn to recognize and be resilient to subtle perturbations.

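* Defensive Distillation: A technique where a “student” model is trained on the softened probability outputs of another model (a “teacher” model), with the aim of making the student less sensitive to adversarial perturbations. Later research has shown that stronger attacks can circumvent it, so it should not be relied on as a sole defense.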

* Certified Robustness: Explore methods that mathematically prove a model’s robustness within certain bounds, although these are often computationally expensive and limited to specific model types.

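* Ensemble Methods: Using multiple models (an ensemble) to make predictions can sometimes improve robustness, as an attacker would need to trick several models simultaneously. The benefit is limited when the models share training data, since adversarial inputs and poisoned samples often affect similar models in similar ways.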

Continuous Monitoring and Anomaly Detection

Early detection is key to mitigating damage from poisoned pipelines.

* Data Drift Monitoring: Continuously monitor incoming production data for significant shifts in distribution or characteristics, which could indicate a poisoning attack.

* Model Performance Monitoring: Track key performance metrics (accuracy, precision, recall) of deployed models. Sudden or unexpected degradation can signal a problem.

* Outlier Detection in Training Data: Implement post-training analysis to identify influential outliers in the training data that disproportionately affect model weights, potentially revealing poisoned samples.

* Explainable AI (XAI) for Post-Incident Analysis: Utilize XAI techniques to understand why a model made a specific decision. This can help pinpoint the impact of poisoned data and debug anomalies.
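Data drift monitoring can be sketched with the Population Stability Index (PSI), a simple statistic for comparing a baseline distribution with a new batch. The bin edges, the sample data, and the small floor `eps` used to avoid division by zero in empty bins are assumptions made for this example. Thresholds around 0.1 and 0.25 are often quoted as rules of thumb for moderate and significant shift, but they are conventions rather than a standard, and drift alone does not prove an attack.

```python
import math

def psi(expected, actual, edges, eps=1e-6):
    """Population Stability Index between two samples over shared bins.

    edges are the interior bin boundaries; values below the first edge fall
    in bin 0 and values at or above the last edge fall in the final bin.
    """
    def proportions(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[sum(v >= edge for edge in edges)] += 1
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / len(values), eps) for c in counts]

    p = proportions(expected)
    q = proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))

# Hypothetical feature values: a baseline and a batch shifted upward by 30.
baseline = list(range(100))
shifted = [v + 30 for v in baseline]
edges = [25, 50, 75]

print(psi(baseline, baseline, edges))
print(psi(baseline, shifted, edges))
```

Identical samples give a PSI of zero, while the shifted batch scores well above the usual alert levels. A slow, deliberate poisoning campaign can keep each batch under any fixed threshold, so comparing against a frozen, trusted baseline rather than the previous batch is a safer design choice.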

Secure Software Development Lifecycle (SSDLC) for ML

Integrate security throughout the entire ML development process.

* Threat Modeling for ML Systems: Conduct specific threat modeling exercises for ML pipelines to identify potential attack vectors unique to data and model vulnerabilities.

* Regular Security Audits and Penetration Testing: Periodically audit ML pipelines, data stores, and models for vulnerabilities. Conduct penetration tests that specifically simulate adversarial AI attacks.

* Training for ML Engineers and Data Scientists: Educate your ML teams on adversarial AI threats, secure coding practices, and data hygiene best practices.

* Version Control for Data and Models: Implement robust version control for both datasets and trained models, allowing for rollbacks to previous, untainted versions if a compromise is detected.

Frequently Asked Questions

Q1: What’s the fundamental difference between data poisoning and data leakage?

A1: Data poisoning involves the malicious injection or manipulation of data into a training set to corrupt a model’s behavior. Data leakage, on the other hand, refers to the unintentional exposure of sensitive or private data, often through misconfigured systems or lax security practices. While both are data-related security issues, poisoning is an active attack against model integrity, while leakage is a breach of data confidentiality.

Q2: Can open-source models be poisoned, and how can I protect against it?

A2: Yes, absolutely. Open-source models, especially those from untrusted or unverified sources, are highly susceptible to poisoning. An attacker could pre-train a model with poisoned data and release it, or even contribute poisoned data to a collaborative dataset. Protection involves rigorous vetting of all third-party models and datasets, including running them through anomaly detection, adversarial robustness checks, and ideally, retraining them on your own verified data.

Q3: How difficult are these attacks to detect in a real-world cloud ML environment?

A3: Detecting adversarial AI data attacks, particularly subtle poisoning, is notoriously difficult. Attackers often aim for stealth, making minimal changes that are hard to distinguish from natural data noise or drift. This is compounded in dynamic cloud environments with continuous learning. It requires sophisticated monitoring, anomaly detection specific to data distributions and model behavior, and often specialized tools that go beyond traditional cybersecurity measures.

Q4: Is there any regulatory guidance available for AI security, especially regarding data integrity?

A4: Yes, the regulatory landscape for AI is evolving rapidly. While laws that specifically address “AI data poisoning” are still emerging, existing regulations such as GDPR and CCPA impose obligations around the protection and handling of personal data, which poisoning can directly affect. Furthermore, bodies like NIST (National Institute of Standards and Technology) provide frameworks and guidelines, such as the AI Risk Management Framework, which emphasize robust data governance, transparency, and security throughout the AI lifecycle. Industry-specific regulations (e.g., in finance or healthcare) also impose strict requirements on the reliability and trustworthiness of AI systems.

Q5: What’s the first step an organization should take to address poisoned pipelines?

A5: The first critical step is to conduct a comprehensive threat assessment and risk analysis of your existing ML pipelines. Identify all data ingestion points, third-party dependencies, and model training processes. Prioritize areas of highest risk based on data sensitivity and model criticality. Simultaneously, educate your ML engineering and data science teams on the nature of these threats and integrate basic data validation and provenance tracking into your immediate development practices. This foundational understanding and initial assessment are crucial for building an effective, long-term defense strategy.

Conclusion: Securing the Future of AI

The promise of Artificial Intelligence is immense, but its realization hinges on our ability to build and deploy trustworthy systems. Adversarial AI data attacks, particularly poisoning, represent a profound and often invisible threat to this trust, capable of undermining models, compromising critical decisions, and inflicting significant damage. As AI permeates every facet of our digital lives, the security of its underlying data pipelines in cloud environments is no longer an afterthought; it is a paramount concern.

Organizations must adopt a proactive, multi-layered defense strategy, integrating robust data validation, secure pipeline architectures, resilient models, and continuous monitoring. This necessitates a cultural shift, embedding security consciousness into every stage of the ML lifecycle and empowering technical teams with the knowledge and tools to combat these sophisticated threats. By understanding the insidious nature of poisoned pipelines and implementing comprehensive safeguards, we can collectively secure the integrity of our AI systems and ensure a future where AI continues to be a force for good. For teams without in-house expertise, working with specialists in cloud ML security can help in navigating this complex landscape.