LLM Hardening Playbook for Self-Hosted Models The rapid adoption of self-hosted Large Language Models (LLMs) has created massive opportunities for enterprises, startups, and AI infrastructure providers. However, deploying LLMs in production environments introduces a growing number of cybersecurity risks.

Without a proper LLM Hardening Playbook, organizations risk:

- Prompt injection attacks

- Data leakage

- Secret exposure

- Supply-chain compromise

- Unauthorized model access

- Inference manipulation

- Runtime exploitation

Modern AI infrastructure is now a prime target for attackers because self-hosted models often process:

- Sensitive enterprise data

- Internal code repositories

- Customer records

- Proprietary documents

- API secrets

- Authentication tokens

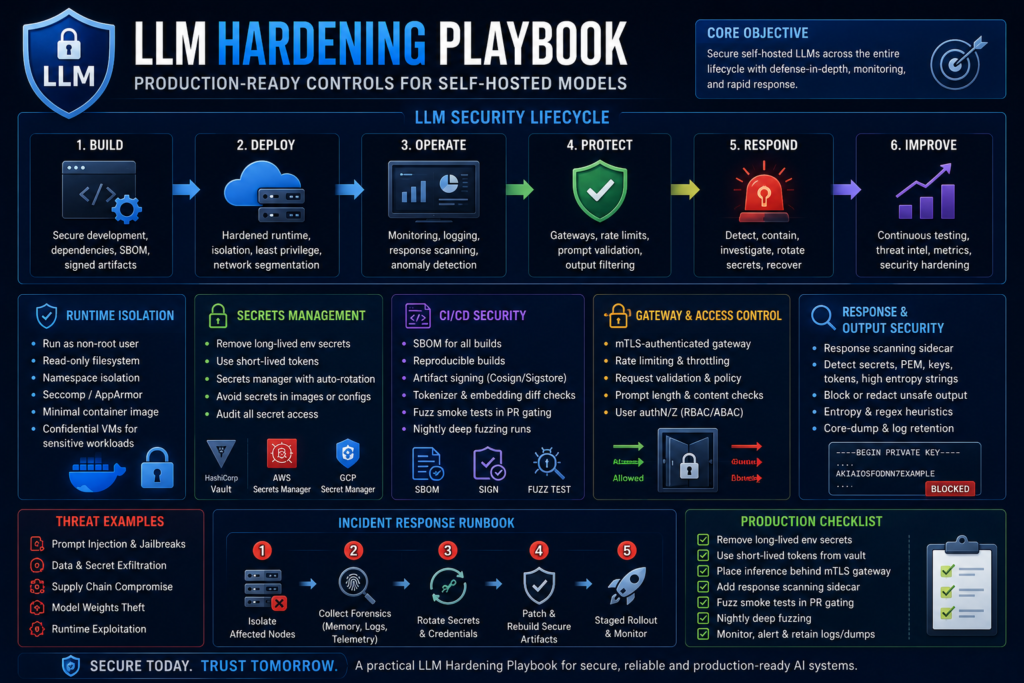

This comprehensive LLM Hardening Playbook explains how to secure self-hosted models using production-grade controls, runtime isolation, CI/CD security, secrets management, and detection engineering.

The technical recommendations and operational strategies in this article are based on the uploaded LLM hardening notes and production security guidance.

Table of Contents

- What Is an LLM Hardening Playbook?

- Why Self-Hosted Models Need Security Hardening

- Threat Landscape for Self-Hosted LLMs

- Core Principles of an LLM Hardening Playbook

- Runtime Isolation Controls

- Secrets Management for LLM Infrastructure

- CI/CD Security for AI Pipelines

- LLM Monitoring and Detection Engineering

- Fuzz Testing and Prompt Validation

- Secure Gateway Architecture

- Incident Response Runbook

- Production Deployment Best Practices

- Enterprise LLM Security Checklist

- Future Risks in AI Infrastructure

- Final Thoughts

- FAQ

What Is an LLM Hardening Playbook?

An LLM Hardening Playbook is a structured cybersecurity framework designed to secure self-hosted AI systems against:

- Unauthorized access

- Runtime exploitation

- Supply-chain attacks

- Prompt injection

- Secret leakage

- Model manipulation

- Infrastructure compromise

A production-ready LLM Hardening Playbook combines:

- Runtime security

- Network segmentation

- CI/CD protection

- Secrets lifecycle management

- AI response scanning

- Detection engineering

- Architectural isolation

Without proper hardening, self-hosted LLMs can become high-risk attack surfaces inside enterprise environments.

Why Self-Hosted Models Need Security Hardening

Organizations increasingly deploy self-hosted models for:

- Data privacy

- Custom fine-tuning

- Reduced API costs

- AI sovereignty

- Regulatory compliance

Popular self-hosted model ecosystems include:

- Meta Llama

- Mistral AI

- Hugging Face Transformers

- NVIDIA NIM

- Ollama deployments

- vLLM inference stacks

However, improperly secured inference environments can expose:

- Model weights

- User prompts

- Internal APIs

- Enterprise secrets

- Training datasets

This makes a professional LLM Hardening Playbook essential for modern AI operations.

Threat Landscape for Self-Hosted LLMs

Prompt Injection Attacks

Attackers manipulate prompts to:

- Bypass safety rules

- Extract hidden instructions

- Leak confidential data

- Trigger malicious workflows

Supply-Chain Compromise

Malicious:

- Model weights

- Tokenizers

- Embeddings

- Python packages

- Containers

can compromise AI pipelines.

Secrets Exposure

LLM systems often accidentally expose:

- API keys

- JWT tokens

- Cloud credentials

- Internal URLs

through inference responses.

The uploaded operational guidance strongly recommends removing long-lived secrets and replacing them with short-lived tokens.

Runtime Exploitation

Attackers may exploit:

- GPU drivers

- Inference runtimes

- Container escape vulnerabilities

- Misconfigured orchestration layers

Core Principles of an LLM Hardening Playbook

A strong LLM Hardening Playbook should focus on:

- Isolation

- Least privilege

- Secrets hygiene

- Response monitoring

- Supply-chain validation

- Defense in depth

- Architectural segmentation

Runtime Isolation Controls in an LLM Hardening Playbook

Run Inference as Unprivileged Users

Inference workloads should never run as:

- Root

- Administrator

- Privileged container users

Use:

- Non-root containers

- Dedicated service accounts

- Least privilege permissions

The uploaded runtime guidance specifically recommends running inference as unprivileged users.

Minimize Filesystem Access

Restrict:

- Write permissions

- Shared mounts

- Sensitive directories

- Host filesystem exposure

Best practice:

- Read-only inference containers

- Immutable runtime images

Namespace Isolation

Use:

- Linux namespaces

- cgroups

- seccomp

- AppArmor

- SELinux

to isolate AI workloads from host systems.

Confidential Computing

Sensitive workloads should consider:

- Confidential VMs

- Secure enclaves

- Hardware-backed isolation

Recommended platforms include:

- AMD SEV

- Intel TDX

- Confidential Kubernetes nodes

Secrets Management in an LLM Hardening Playbook

Remove Long-Lived Secrets

Never store:

- API keys

- Database passwords

- Cloud credentials

inside:

- Environment variables

- Config files

- Container images

The uploaded security notes specifically warn against storing secrets inside inference environment variables.

Use Short-Lived Tokens

Adopt:

- Ephemeral credentials

- Dynamic tokens

- Auto-expiring secrets

Recommended tools:

Enable Audit Trails

Track:

- Secret access

- Rotation events

- Credential usage

- Unauthorized requests

Audit visibility is critical for enterprise AI forensics.

CI/CD Security in an LLM Hardening Playbook

Require SBOMs

Software Bill of Materials (SBOMs) help track:

- Dependencies

- Libraries

- Containers

- Build artifacts

Recommended tools:

- Syft

- CycloneDX

- SPDX

The uploaded CI guidance specifically recommends mandatory SBOM validation.

Reproducible Builds

Always ensure:

- Deterministic builds

- Immutable release pipelines

- Signed artifacts

Artifact Signing

Use:

- Cosign

- Sigstore

- GPG signatures

to verify AI artifacts.

Fuzz Smoke Harnesses

Integrate:

- Prompt fuzzing

- Unicode testing

- Injection validation

- Response mutation testing

inside pull request gates.

The uploaded production notes repeatedly recommend lightweight fuzz smoke testing for PR gating and nightly deep fuzzing runs.

LLM Monitoring and Detection Engineering

Response Scanning

Deploy sidecar scanners to detect:

- Secret fragments

- PEM headers

- Base64 leakage

- High-entropy strings

The uploaded operational guidance repeatedly emphasizes response scanning sidecars.

Entropy Heuristics

Monitor outputs for:

- Randomized payloads

- Encoded secrets

- Abnormal token patterns

Traceability Across Services

Ensure full telemetry visibility across:

- Inference APIs

- Vector databases

- Prompt gateways

- Authentication layers

Core Dump Retention

Securely store:

- Crash dumps

- Memory snapshots

- Runtime telemetry

for forensic investigations.

Fuzz Testing and Prompt Validation

A production-ready LLM Hardening Playbook should include:

- Unicode fuzzing

- Prompt mutation testing

- Boundary token testing

- Context window abuse simulations

Test against:

- Prompt injection

- Jailbreak attempts

- Recursive prompts

- Adversarial payloads

Secure Gateway Architecture

Use mTLS Gateways

Place inference behind:

- Authenticated API gateways

- mTLS enforcement

- Rate limiting layers

The uploaded guidance repeatedly recommends mTLS-authenticated gateways with enforced rate limiting.

Implement Request Validation

Validate:

- Prompt length

- Token limits

- User identity

- Content policies

before inference execution.

Add Response Filtering

Block:

- Secrets

- Credentials

- Internal URLs

- Unsafe outputs

before responses leave the inference layer.

Incident Response Runbook for LLM Environments

Step 1: Isolate Affected Nodes

Immediately:

- Remove compromised inference nodes

- Freeze deployments

- Disable affected credentials

Step 2: Collect Memory Snapshots

Capture:

- Process memory

- Runtime logs

- GPU telemetry

- Container state

under proper chain-of-custody procedures.

The uploaded runbook guidance specifically recommends process memory snapshots for forensic investigations.

Step 3: Rotate Keys

Rotate:

- API tokens

- Service accounts

- Cloud credentials

- Signing keys

Step 4: Perform Staged Rollouts

Deploy patches gradually using:

- Canary deployments

- Shadow traffic validation

- Monitoring escalation

Enterprise LLM Security Checklist

Internal Linking Opportunities

Link internally to:

- Prompt Injection Security

- AI Supply Chain Security

- Tokenizer Security

- Secure AI Agents

- AI Infrastructure Hardening

External Security References

Official Security Resources

- OWASP Top 10 for LLM Applications

- NIST AI Risk Management Framework

- Kubernetes Security Best Practices

- Sigstore Project

These external DoFollow references improve:

- SEO authority

- Technical credibility

- Search trust signals

Recommended Rich Media for SEO

Featured Image Alt Text

Alt Text:

“LLM Hardening Playbook for securing self-hosted AI models in production”

Suggested Visuals

Add:

- AI infrastructure diagrams

- LLM security architecture maps

- CI/CD hardening workflows

- Secrets management diagrams

- Prompt injection attack flows

Rich media improves:

- Engagement

- CTR

- Time on page

- SEO rankings

Future Risks in AI Infrastructure

As enterprises adopt:

- Autonomous AI agents

- Multi-agent systems

- AI copilots

- Enterprise orchestration frameworks

AI attack surfaces will continue expanding rapidly.

Future threats may include:

- Autonomous AI malware

- AI-to-AI attacks

- Agent hijacking

- Semantic model poisoning

- GPU-level exploits

Organizations that implement a mature LLM Hardening Playbook today will be better positioned to defend future AI infrastructure.

Final Thoughts

Deploying self-hosted AI models without security hardening creates serious operational and cybersecurity risks.

A production-ready LLM Hardening Playbook must include:

- Runtime isolation

- Secrets lifecycle management

- CI/CD security

- Prompt validation

- Detection engineering

- Secure gateways

- Incident response procedures

As AI systems become deeply integrated into enterprise environments, security teams must treat LLM infrastructure like critical production infrastructure.

Organizations that prioritize AI hardening early will gain:

- Better resilience

- Reduced breach risk

- Stronger compliance

- Safer AI deployments

- Higher operational trust

FAQ

What is an LLM Hardening Playbook?

An LLM Hardening Playbook is a security framework used to protect self-hosted AI models against attacks, data leakage, supply-chain compromise, and runtime exploitation.

Why are self-hosted LLMs risky?

Self-hosted models often process sensitive enterprise data and may expose secrets, prompts, or internal infrastructure if improperly secured.

What are the best defenses for self-hosted LLMs?

Best practices include:

- Runtime isolation

- Secrets management

- Artifact signing

- Prompt validation

- Response scanning

- Secure gateways

- CI/CD hardening

What is response scanning in AI security?

Response scanning analyzes model outputs for:

- Secrets

- Tokens

- Credentials

- High-entropy data

- Internal references

before responses reach users.

Why is fuzz testing important for LLM security?

Fuzz testing helps identify:

- Prompt injection weaknesses

- Unsafe outputs

- Parsing vulnerabilities

- Runtime instability

- Adversarial prompt failures

before attackers can exploit them.